Reduce Workload and Improve Feedback... There's Got to Be A(I) Catch!

This article was originally written by Tom Southerden and Charlotte Clifford for the UTCN internal school newsletter. It is republished here with their permission as an honest, unedited account of what it's actually like to adopt AI marking in a secondary school.

Teachers are leaving the profession in droves, citing workload and burnout as the crux. But has AI arrived just in time to save our skilled staff, is it here to steal our jobs, or is it just another (rather expensive) fad?

The Marking Mountain

It comes as no surprise that AI marking tools have exploded onto the market for teachers: workload is the eternal issue that we have not yet managed to master. Routines, centralised curriculums, and live marking feel like you have won the teaching lottery until it is Spring 2 and you have 150 exam papers slammed onto your desk, barely able to see your classes over the impending marking load. On top of that, there's no doubt that essay subjects require a great deal more time than short-answer questions.

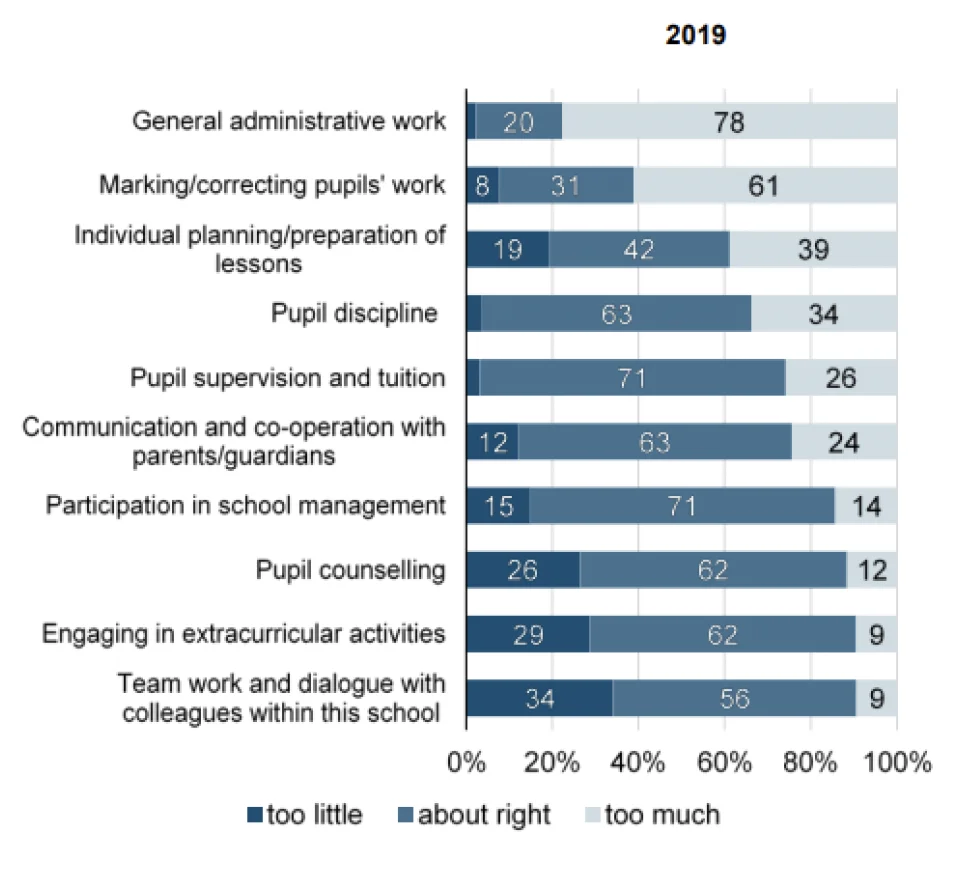

The Teacher Workload Survey (DfE, 2019) reported that 61% of teachers felt that they spent too much time marking pupil work. Research has shown that intensive periods of marking can decrease the quality of classroom provision during these periods and lead to inconsistency in marking through teacher fatigue and bias. All of this can in turn lead to increased staff absence, with significant cost implications. Workload alone, however, cannot be the motivation for the introduction of AI marking: entrusting one of our most valuable insights into student progress to our robot overlords is a huge risk!

DfE Teacher Workload Survey, 2019

Having participated in the AQA marking series last summer, Charlotte found it took roughly 15 minutes to mark each of the exam papers in a batch of Literature Paper 1. That's a mere 10 papers completed in 2 and a half hours. After a full day of teaching, that would leave even the most experienced teacher bleary-eyed and unable to bear staring at the screen any longer. The benefit of marking for AQA? The additional pay day when you reach your quota! The motivation for marking mocks is not quite the same and, using our rudimentary maths skills, 150 papers at 15 minutes each equates to 37.5 hours of additional marking outside of teaching just in Spring 2. An entire working week for those outside of the profession.
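For anyone who wants to rerun the sums with their own class sizes, the calculation can be sketched in a few lines of Python. The 15 minutes per paper and the 150 papers are the figures from this account; the function name `marking_hours` is purely illustrative.

```python
def marking_hours(papers: int, minutes_per_paper: float) -> float:
    """Total marking time in hours for a batch of papers."""
    return papers * minutes_per_paper / 60


# Figures from the article: a sitting of 10 AQA papers,
# then the 150 mock papers that land in Spring 2.
print(marking_hours(10, 15))   # 2.5
print(marking_hours(150, 15))  # 37.5
```

Swapping in a different batch size or a different time per paper gives a quick estimate for another subject or year group.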

Finding the Right Tool

In the new era of AI tools and companies boasting AI-enhanced features, finding the right company to use for marking trials was a challenge. A plethora of AI marking companies exist; however, most are only able to analyse typed answers and are often trained on only a limited knowledge base. This meant that UTCN needed to find a company that included transcription as part of its process alongside a robust assessment model. We needed an AI model with a deep contextual understanding and an ever-improving knowledge base. Its development had to be evidence- and expert-driven and, importantly, those experts had to be responsive to our feedback as frontline teachers and subject leaders. Top Marks AI became just this solution, which has in turn led to the English Department halving their marking load...

The Trial: Trust but Verify

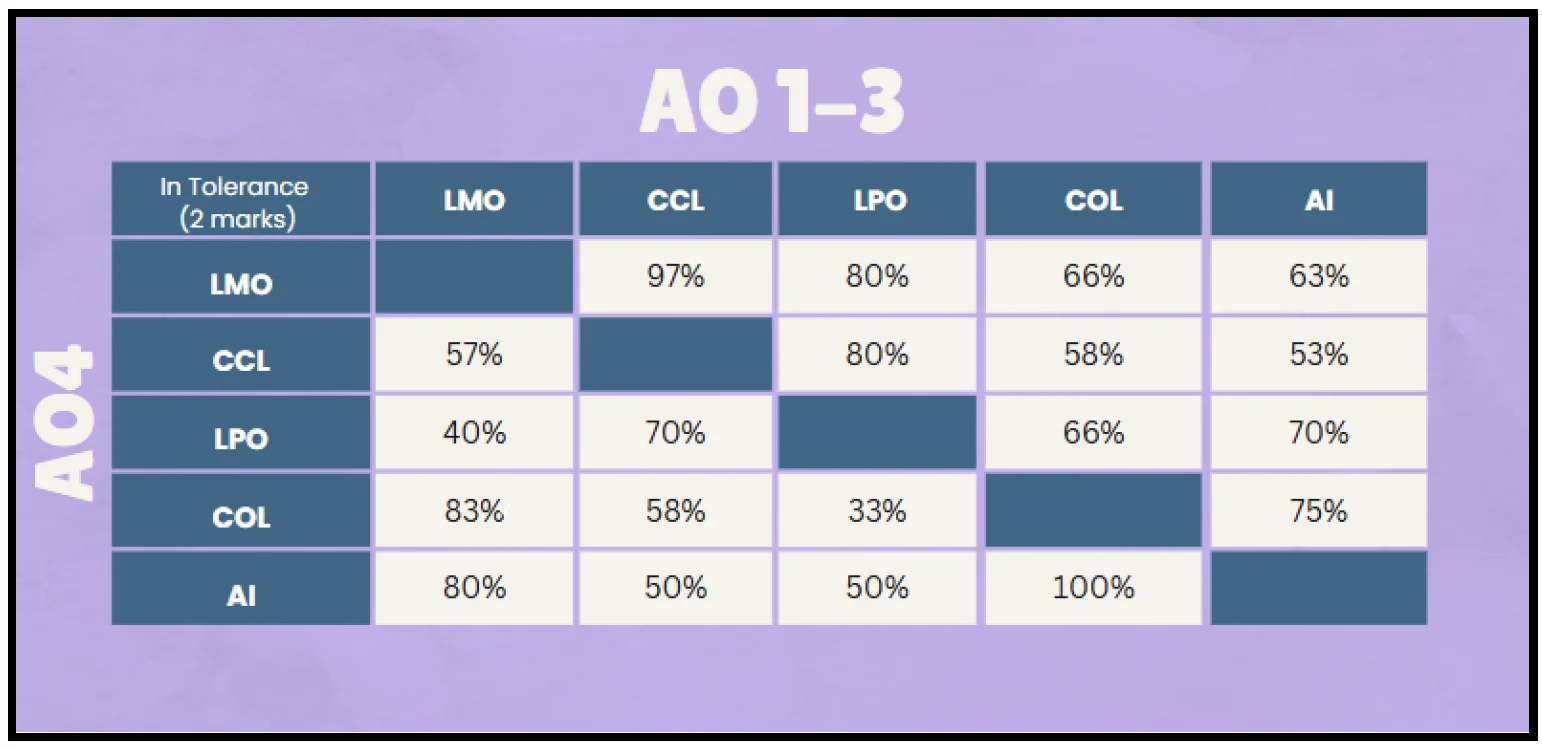

The process has not been simple. We began by trialling AI marking alongside mocks that we were marking ourselves. As much as we looked at Top Marks with bright, shining eyes, we thought that perhaps it was too good to be true. The first moderation process, in which 5 members of staff and the AI marked the same work, identified that the AI was within tolerance of the marks that we had given students. The most shocking revelation was the degree of tolerance we had with one another! We very quickly identified that, as human beings, there were discrepancies within our marking and, if the AI fit within tolerance, it effectively worked just as well as us (if not better!).

UTCN's internal moderation: AI (rightmost column) within tolerance of human markers across all assessment objectives.
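A moderation check of this kind can be sketched roughly as follows. This is a minimal illustration, not the process UTCN or Top Marks actually used: the marks, the 30-mark scale, the tolerance of 2 marks, and the choice to compare the AI against the human mean are all assumptions made for the example.

```python
from statistics import mean


def human_spread(human_marks: list[float]) -> float:
    """Gap between the most generous and the harshest human marker."""
    return max(human_marks) - min(human_marks)


def within_tolerance(ai_mark: float, human_marks: list[float], tolerance: float) -> bool:
    """True if the AI mark is no further than `tolerance` from the human mean."""
    return abs(ai_mark - mean(human_marks)) <= tolerance


# Hypothetical marks out of 30 for a single script; NOT UTCN data.
humans = [18, 20, 21, 19, 22]
ai = 20

print(human_spread(humans))             # 4
print(within_tolerance(ai, humans, 2))  # True
```

In this made-up case the five human markers differ from one another by more than the tolerance applied to the AI, which is the kind of discrepancy the moderation exercise brought to light.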

While the AI was broadly in line with us for Literature Paper 1 (give or take it not understanding rubric infringements especially well), it was worlds apart when marking creative writing questions.

It was wildly inaccurate and we figured this was due to how open to interpretation the mark scheme was without the fact and context of a Literature text to guide it. We could not justify the inaccuracies, especially at such a crucial stage in the academic careers of our students.

The Rough Edges

Of course, AI models are not infallible. Even a well-trained AI can occasionally hallucinate, or be too forgiving or, in some cases, too harsh in its marking. To some degree, transcription was also a factor affecting the performance of the AI. In most cases of poor handwriting (some of which even we struggled to interpret), the model would make a good attempt at transcribing meaningful text but would occasionally make wild assumptions or include words which had not been fully crossed out. The use of an asterisk to denote the insertion of an additional paragraph or sentence at the end of the script, and other "student markings", were also not fully understood, but as the model has developed, so too has its ability to deal with the often challenging format of the answers our students give.

What Won Us Over

What made Top Marks a particularly attractive offer for us was their approach to model training and feedback. Each model is trained to mark a specific question with moderated examples, mark schemes, examiners' notes and further expert context and tweaking. More recently, Top Marks were granted access to over 3,000 essays marked by Chief Examiners, which they were able to run through their programme to teach, tweak and tailor it to be as in line with marking expectations as possible. Following this, Cambridge Assessment checked the accuracy of their marking tools and determined them to have a 0.94 correlation (1 being absolutely perfect). This approach to model training means a more accurate output than most competitors, not just in numerical marks but in meaningful written feedback too!
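For readers curious about what a correlation figure like this measures, here is a small sketch. We do not know which statistic Cambridge Assessment used, so the Pearson correlation below is an assumption, and the marks are invented purely for illustration.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation between two equal-length lists of marks."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # How the two sets of marks move together around their means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # How much each set of marks varies on its own.
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Hypothetical examiner and AI marks for six scripts; NOT real data.
examiner_marks = [12, 18, 22, 15, 27, 20]
ai_marks = [13, 17, 23, 14, 26, 21]

print(round(pearson(examiner_marks, ai_marks), 2))
```

A value of 1 would mean the AI ranks and spaces the scripts exactly as the examiner does. Note that a high correlation does not by itself rule out the AI being consistently a mark or two more generous or harsher, which is why tolerance checks in moderation remain useful.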

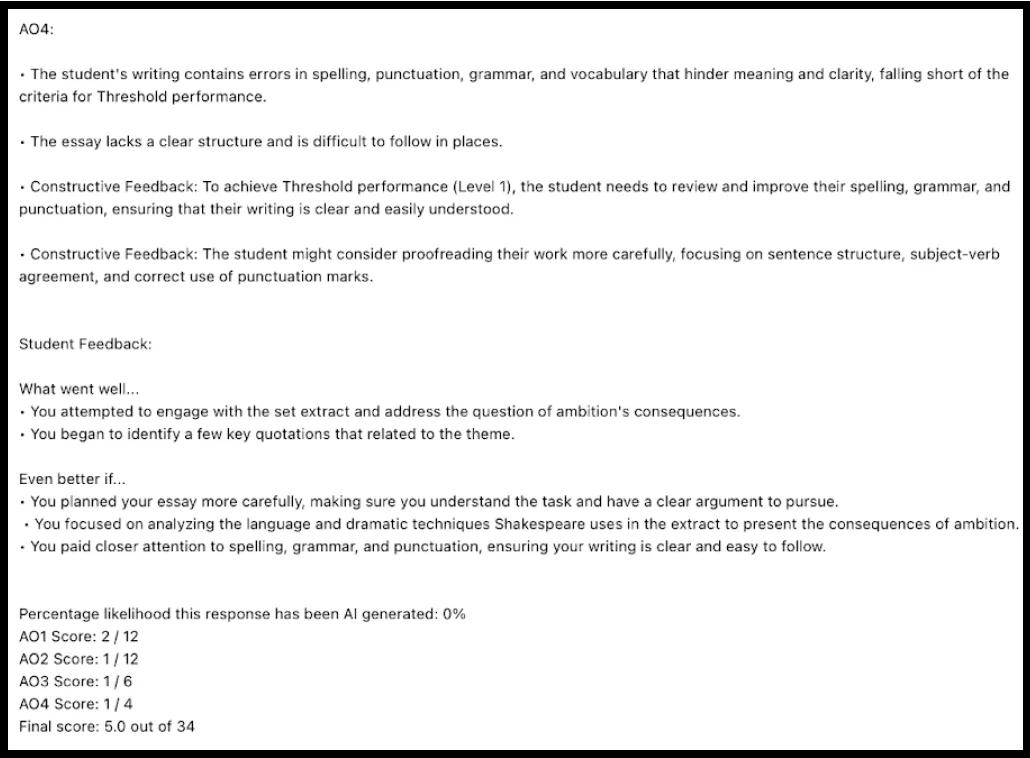

After marking each essay you are provided with in-depth AO level feedback on each student's answer alongside student friendly "What Went Well" and "Even Better If" statements (which make for great DPR collaborations). This feedback can then be run through your favourite GPT to generate personalised intervention or extension for your pupils, though even this feedback alone is a powerful tool for our teachers and pupils. A teacher reading this feedback — or analysing the data output — is quickly able to identify common misconceptions or recognise potential knowledge gaps. The aim of AI marking is not just to reduce workload but improve how we use assessment to inform our planning and teaching.

Example feedback output: AO-level marks, teacher notes, and student-friendly WWW/EBI.

The Logistical Learning Curve

This all sounds promising but during this process, Charlotte was at the heart of logistical nightmares. Students writing multiple answers on the same page and AI not being able to separate answers. Not realising that there were two An Inspector Calls questions and having to separate exam scripts into two piles before scanning and inputting data. Failing to communicate with the exam team that the lined paper should not be stapled therefore leading to hours of time spent meticulously removing staples from paper so it would go through the scanner. The scanner is breaking. The scanner is not sending an email. The list, unfortunately, continues. All a steep learning curve to get right but all for Top Marks to turn around and make our lives easier once again.

A Partnership, Not Just a Product

Luckily for us, Top Marks wanted to hear our feedback: the good, the bad, the ugly. In doing so we forged a relationship with them that led to additional features being crafted to make the platform more accessible for everyone. We have progressed from the arduous task of renaming every file we scan through in order to attribute the paper to a student, to now having student barcodes, auto attributing the work, making it easy to download and copy into our trackers. Feedback was made available as a spreadsheet for whole groups alongside individual feedback allowing for more rapid analysis and more impactful learning conversations. Alongside the platform, the model has continued to develop and grow, responding to our needs and recognising opportunities and threats, for example developing the AI to recognise where the transcription may have been incorrect or unclear.

New models have been developed which are far more capable of handling the unknowns of creative writing, poetry comparison and English Language, alongside further improvements to their other Humanities subjects. We are now at a stage where we are trialling their Beta software: an entire exam paper created, crafted and ready to scan in, so that all of the leg work is done by AI which, in turn, will reduce the time spent scanning and filtering through data. Alongside this, we are also now expanding our trials into Geography and History, with other subjects to follow, and the results (so far) look very promising.

The result: Despite the chaos we have emerged with wins — an AI marking checklist for the logistical side, swift department moderations and, for the most part, a 50% reduction in our marking load.

A victory for AI marking and a victory for teacher workload. After all, there's no point crying over stapled paper (or whatever the saying is!)

Tom Southerden and Charlotte Clifford are teachers at UTCN (University Technical College Norfolk).
